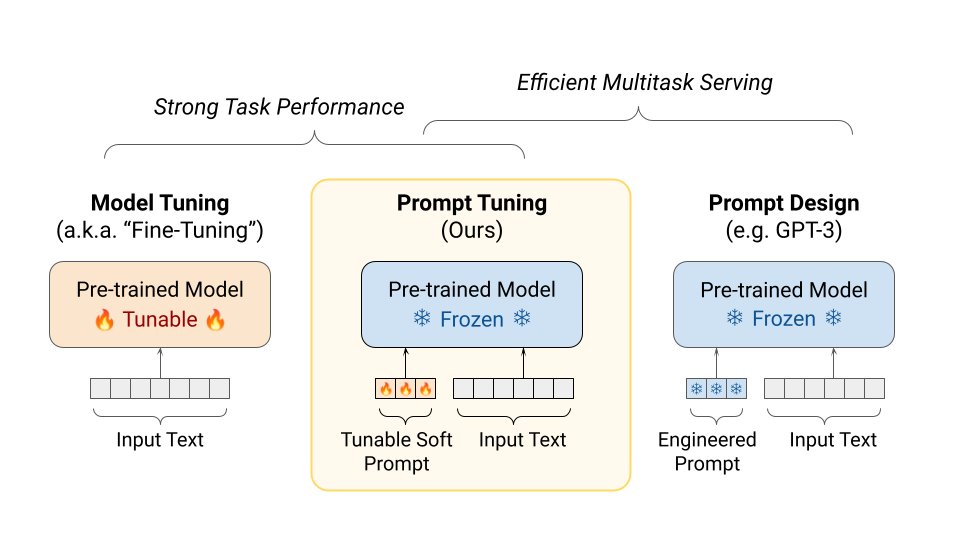

Google AI on X: "Fine-tuning pre-trained models is common in NLP, but forking the model for each task can be a burden. Prompt tuning adds a small set of learnable vectors to

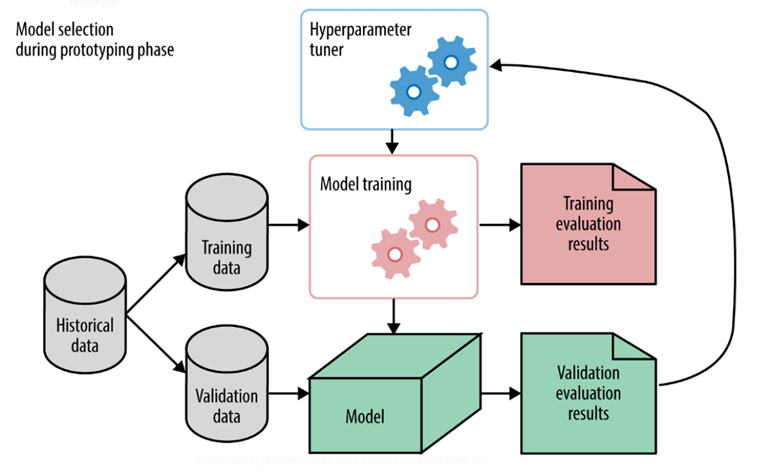

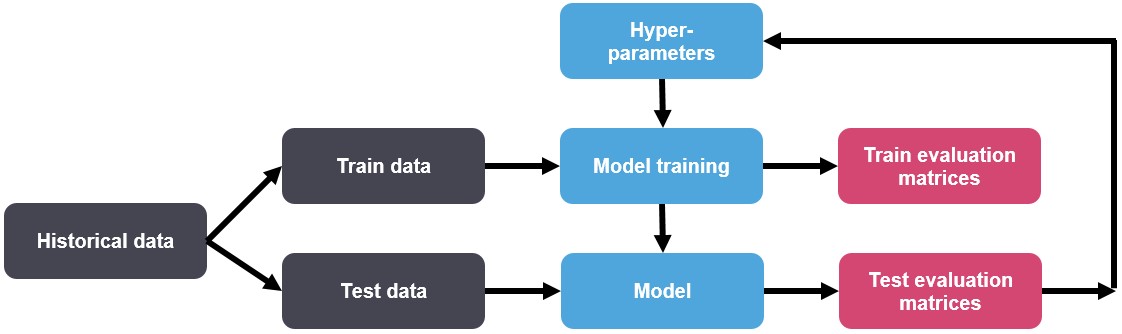

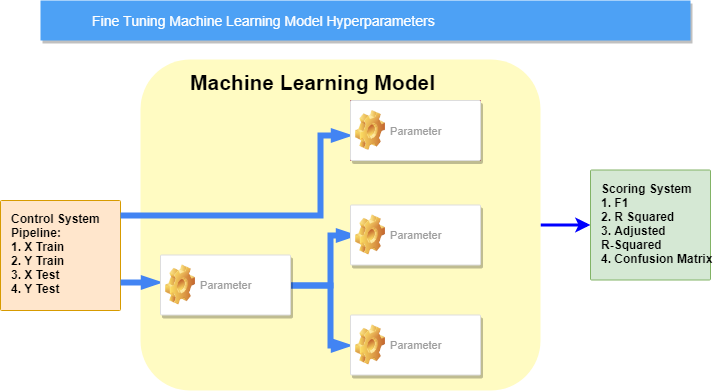

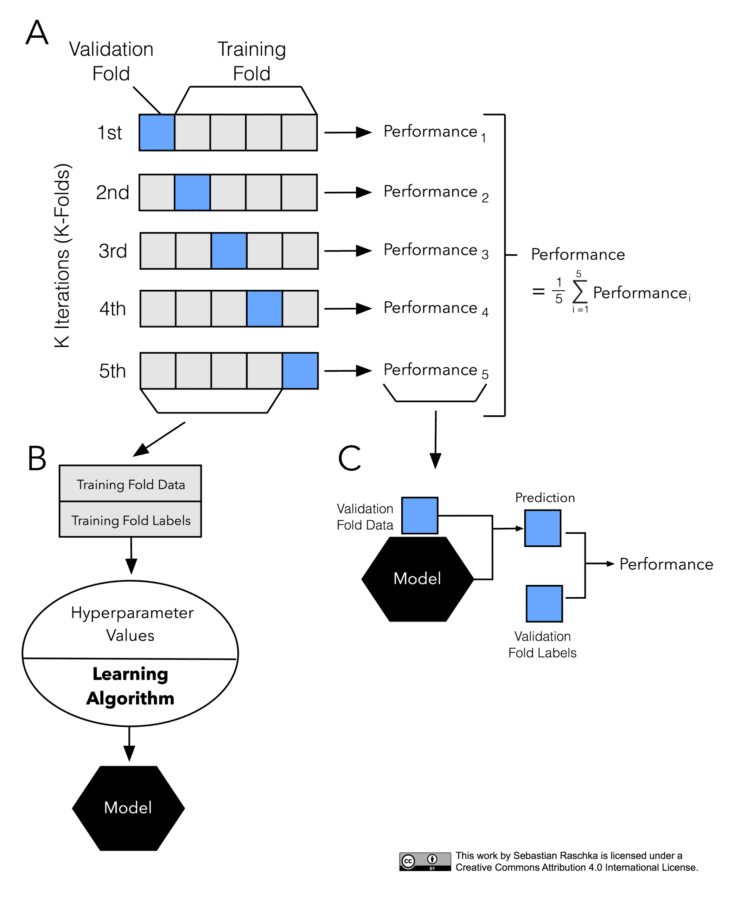

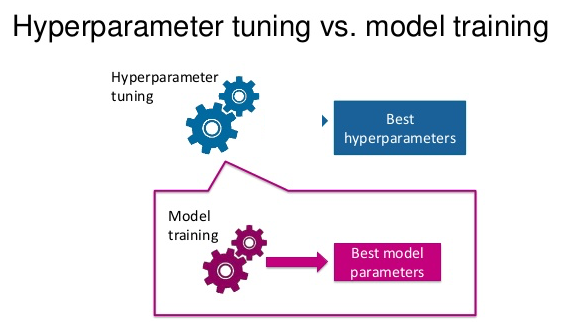

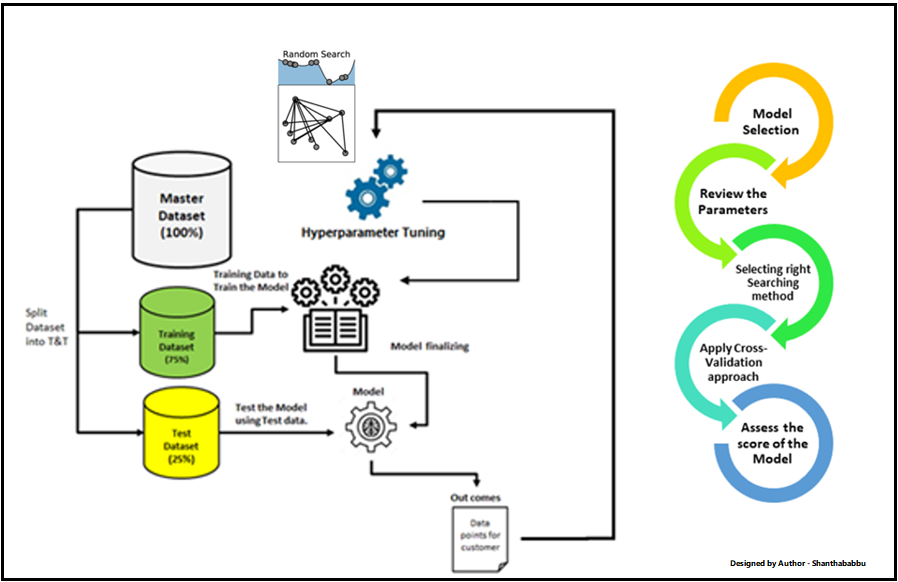

Understanding Hyperparameters and its Optimisation techniques | by Prabhu Raghav | Towards Data Science

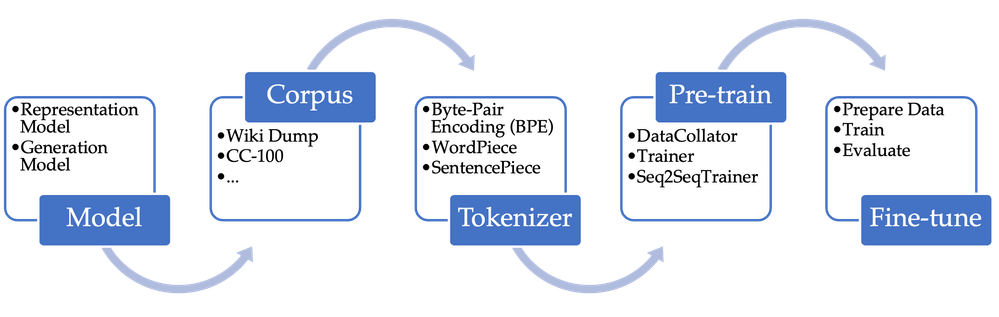

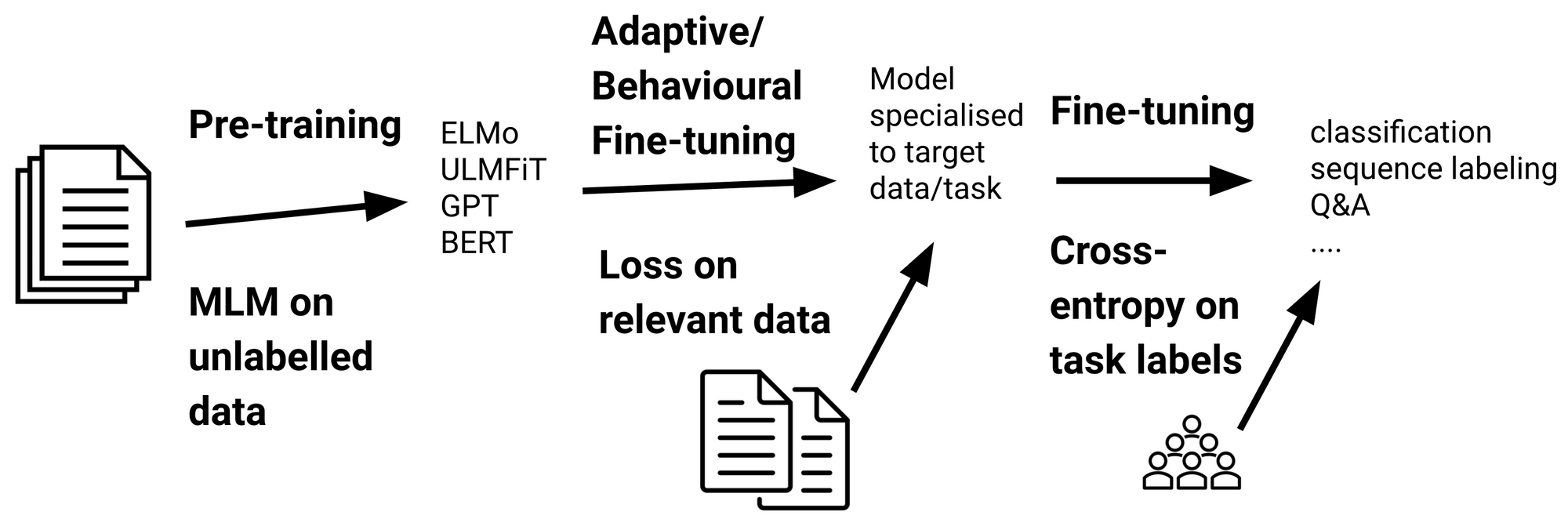

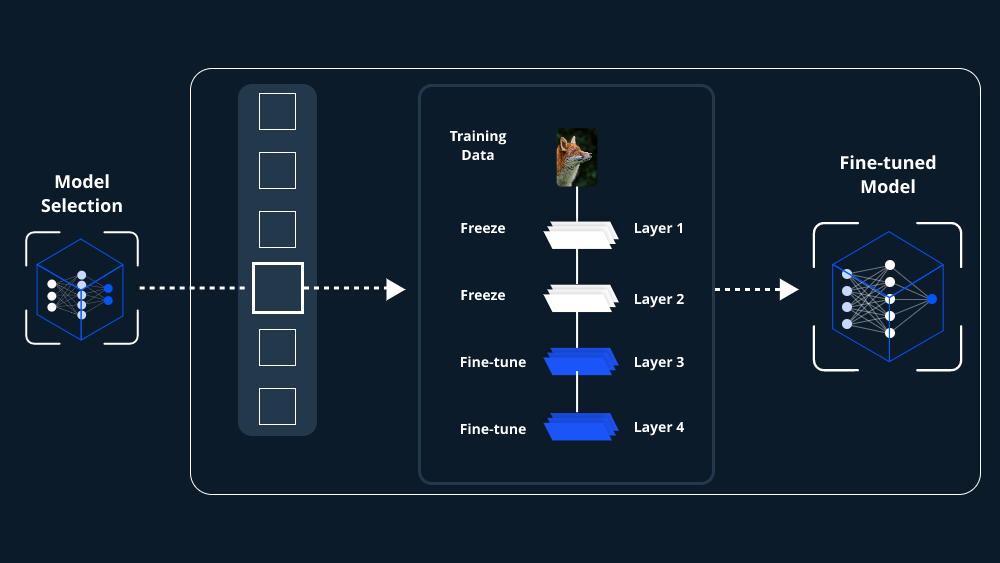

Fine-Tuning Pre-Trained Models: Unleashing the Power of Generative AI | by LeewayHertz | Product Coalition
